CHAPTER 66 Clinical Quality and Safety in Cardiac Surgery

At the turn of the 20th century, Dr. Ernest A. Codman (1869-1940) was rejected by the Boston medical community for his maverick ideas supporting the evaluation and publication of surgical outcomes. Codman eventually founded his own hospital dedicated to the study of “end results” and published his outcomes.1 His work was seminal in the establishment of the American College of Surgeons and the Joint Commission. A half-century later, the Boston medical community redeemed itself with the landmark report by Beecher and Todd, “A Study of the Deaths Associated with Anesthesia and Surgery.”2 Death was the designated outcome, as it was the one that could be agreed on by all investigators.3 Two decades later, the focus shifted to the application of the critical incident technique and identification of remediable factors that contribute to anesthesia-related morbidity and mortality, and the eventual creation of the Anesthesia Patient Safety Foundation.4–6

In the spirit of Codman’s focus on end results and the Anesthesia Patient Safety Foundation’s focus on remediation, cardiac surgeons have played a major role in the study of outcomes and the implementation of quality improvement techniques. The relative uniformity and limited types of cardiac operations, together with their case volume, aggregate cost, and high public profile, impelled interested stakeholders to quantify outcomes. In 1972, the Department of Veterans Affairs (VA) created a Cardiac Surgery Consultants Committee Advisory Group, which resulted in the first multi-institutional cardiac surgery outcomes database. Until 1988, the main outcomes were volume and unadjusted mortality.7 In 1987, the Health Care Financing Administration (HCFA) published raw institution-specific mortality rates for Medicare patients.8 Mortality rates were generated for entire hospitals in aggregate, as well as for specific diagnosis-related groups (DRGs), including coronary artery bypass grafting (CABG). With growing concern over the confusion developing from raw mortality rates, the VA introduced a risk-adjustment model and created what is now known as the VA Continuous Improvement in Cardiac Surgery Program.9 Motivated by both clinical and statistical imperatives, in 1989 the Society of Thoracic Surgeons (STS) developed its own voluntary, risk-adjusted database for cardiac surgery. Also in 1989, Parsonnet and colleagues pioneered a predictive model that classified patients into five groups of increasing operative risk according to 14 preoperative risk factors.10 The model proved to be highly predictive when applied to a large number of patients in three hospitals. 
The 1990s saw the development of various statistical methods to adjust for preoperative risk as well as social and geographic differences.11,12 In 1987 in New England, a consortium of hospitals, the Northern New England Cardiovascular Disease Study Group, began to collect data uniformly in a common registry.13 The Alabama Coronary Artery Bypass Grafting Cooperative project gathered data beginning in 1995.14 However, among these and other registries established in the early and mid-1990s, the universal finding was that operative mortality varied widely among institutions, even after risk adjustment.14–16 This observation presaged complementary movements: (1) public reporting of statewide outcomes and (2) strategic interventions to improve outcomes. Hannan and coworkers’ studies of CABG mortality in New York State led to the first statewide reporting of operative mortality.17,18 Statutory requirement for public reporting was subsequently adopted in Pennsylvania, New Jersey, and Massachusetts.19–21

An equally important result has been the cooperative analysis of outcomes with a focus on performance improvement. The Northern New England Cardiovascular Disease Study Group became a leader in this movement, in which surgeons, cardiologists, anesthesiologists, nurses, and perfusionists collaborated to review data and current practice, target key variables that drive outcomes, and organize improvement projects, such as inter-institutional site visits and study protocols, with resultant decline in CABG mortality.15 A number of regional, national, and even international groups have followed this registry and quality improvement model, establishing benchmarks for the cardiac surgical “industry.”22–24

Around the turn of the 21st century, national specialty societies began establishing guidelines for the application of interventional and surgical technologies. The American College of Cardiology (ACC) and American Heart Association (AHA) published their first Guidelines for Coronary Bypass Surgery in 1999, with an update in 2005.25,26 The guidelines grade each recommendation as class I (useful and effective), class IIa (evidence favors usefulness), class IIb (evidence less well established), or class III (not useful or effective, and in some cases harmful). Similar guidelines have been established for valvular surgery.27,28

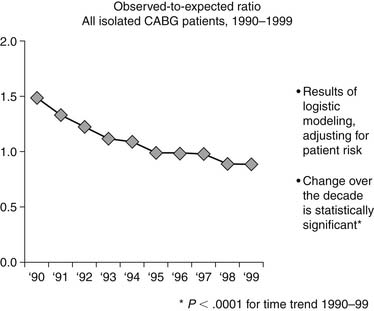

The net impact of all these activities is dramatically apparent when viewing the decline in the ratio of observed (O) to expected (E) CABG mortality of patients, as measured by the STS database. In 1990 the O-to-E ratio was 1.49, and by 1999 it was 0.88, P < .0001 (Fig. 66-1).29
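As a rough illustration of how an O-to-E ratio is formed, the sketch below sums each patient's model-predicted probability of death to get the expected count and divides the observed deaths by it. The function name, the ten-patient cohort, and its risk values are hypothetical and are not drawn from the STS database; the last line simply restates the reported change from 1.49 to 0.88 as a relative decline.

```python
def observed_to_expected_ratio(died, predicted_risk):
    """Return observed deaths divided by expected deaths.

    died: iterable of booleans, True if the patient died.
    predicted_risk: iterable of predicted mortality probabilities (0 to 1),
        one per patient, from some risk-adjustment model (assumed given).
    """
    died = list(died)
    predicted_risk = list(predicted_risk)
    if len(died) != len(predicted_risk):
        raise ValueError("one predicted risk is needed per patient")
    observed = sum(1 for d in died if d)   # O: count of actual deaths
    expected = sum(predicted_risk)         # E: sum of predicted probabilities
    if expected <= 0:
        raise ValueError("expected deaths must be positive")
    return observed / expected


# Hypothetical cohort of 10 patients (illustrative numbers only).
risks = [0.01, 0.02, 0.02, 0.03, 0.05, 0.05, 0.10, 0.12, 0.20, 0.40]
outcomes = [False] * 9 + [True]            # one observed death
ratio = observed_to_expected_ratio(outcomes, risks)

# Relative decline implied by the ratios quoted in the text (1.49 to 0.88).
relative_decline = (1.49 - 0.88) / 1.49

print(f"O/E ratio: {ratio:.2f}")
print(f"Relative decline in O/E, 1990 to 1999: {relative_decline:.1%}")
```

A ratio above 1 means more deaths occurred than the risk model predicted for that patient mix, and a ratio below 1 means fewer.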

NATIONAL LANDSCAPE FOR QUALITY AND SAFETY

In addition to quality and safety initiatives specific to cardiac surgery, there has been a major cultural change nationally over the past decade. Leadership in the prioritization of quality and safety has come from the Institute of Medicine (IOM). In a groundbreaking publication in 1999, “To Err Is Human,” the IOM made several important contributions.30 First, the report estimated that up to 98,000 patients per year may have died as a result of medical error. Second, it identified that errors are often caused by faulty systems, processes, and conditions. Third, it cited the work of Lucian Leape and colleagues, who codified four types of errors: diagnostic, treatment, preventive, and other (Box 66-1).31

Box 66–1 Types of Medical Errors

Adapted from Leape L, Lawthers AG, Brennan TA, et al. Preventing medical injury. Qual Rev Bull 1993;19:144-9.

To achieve a better safety record, the IOM report recommended a four-tiered approach:

Two years later, the IOM published another landmark report, “Crossing the Quality Chasm,”32 which set a national agenda aimed at narrowing differences in quality among providers of medical care. Instead of attention to a single outcome, the IOM focused on the quality of the entire patient experience, defining it in six key dimensions as being “safe, effective, efficient, timely, patient-centered, and equitable.” The model promulgated a balanced approach to assessment of quality, incorporating clinical outcomes with patient experience and the appropriate allocation of resources. Furthermore, the report identified key redesign imperatives for care delivery:

These two IOM reports raised the bar to a new level of excellence that transcended the expertise of a single care provider. In 2006, a third IOM report, “Performance Measurement: Accelerating Improvement,” laid the groundwork for performance measurement.33 Achieving excellence now required a careful orchestration of care delivery, incorporating evidence-based care processes, as well as coordinated care infrastructure across the care delivery continuum—both inpatient and outpatient. Hardwiring for excellence became a priority, leading the Joint Commission (formerly known as JCAHO) to begin requiring demonstration of evidence-based and safe practices in the delivery of care. Payers and purchasers followed suit. The Centers for Medicare and Medicaid Services (CMS) instituted the requirement for submission of “core measures,” evidence-based practices in the care of patients with acute myocardial infarction, heart failure, pneumonia, and selected surgeries (including cardiac surgery).

An outcome of these various national initiatives is the standardization of surgical care. Postoperative complications have a significant impact on mortality, length of stay (3 to 11 days), and cost.34 Increased costs of complications are estimated to be $1398 per patient for infectious complications, $7789 per patient for cardiovascular complications, $52,466 per patient for respiratory complications, and $1810 per patient for thromboembolic complications.35 In 2002, CMS, in collaboration with the Centers for Disease Control and Prevention, implemented the National Surgical Infection Prevention Project with the goal of decreasing the morbidity and mortality associated with postoperative surgical site infections by promoting appropriate selection and timing of prophylactic antimicrobials.36 In April 2003, this group joined with representatives of the VA, the American College of Surgeons, the American Society of Anesthesiologists, the Agency for Healthcare Research and Quality, the American Hospital Association, and the Institute for Healthcare Improvement, to align efforts to reduce surgical complications and mortality. This collaboration resulted in the development of the Surgical Care Improvement Project (SCIP), a national quality partnership of organizations committed to improving the safety of surgical care through the reduction of postoperative complications.36 The SCIP steering committee established a national goal of reducing preventable surgical morbidity and mortality by 25% by 2010. The rapid rate of adoption of the SCIP measures can be tracked on the CMS website Hospital Compare (www.hospitalcompare.hhs.gov), showing percent compliance with these measures in hospitals across the country.

EVOLVING LANDSCAPE OF QUALITY AND SAFETY IN CARDIAC SURGERY

From 1989 to 2007, the STS database grew to become the largest and most comprehensive single-specialty clinical database in health care in the world.37 In view of the increasing interest of payers and regulators in comparing cardiac surgery quality, the STS established a Quality Measurement Task Force (QMTF). Its goal was to develop a methodology for comprehensive assessment of adult cardiac surgery quality of care. The assessment was to include both individual measures and a composite quality score. Guiding principles included the following38:

